Frans de Jong

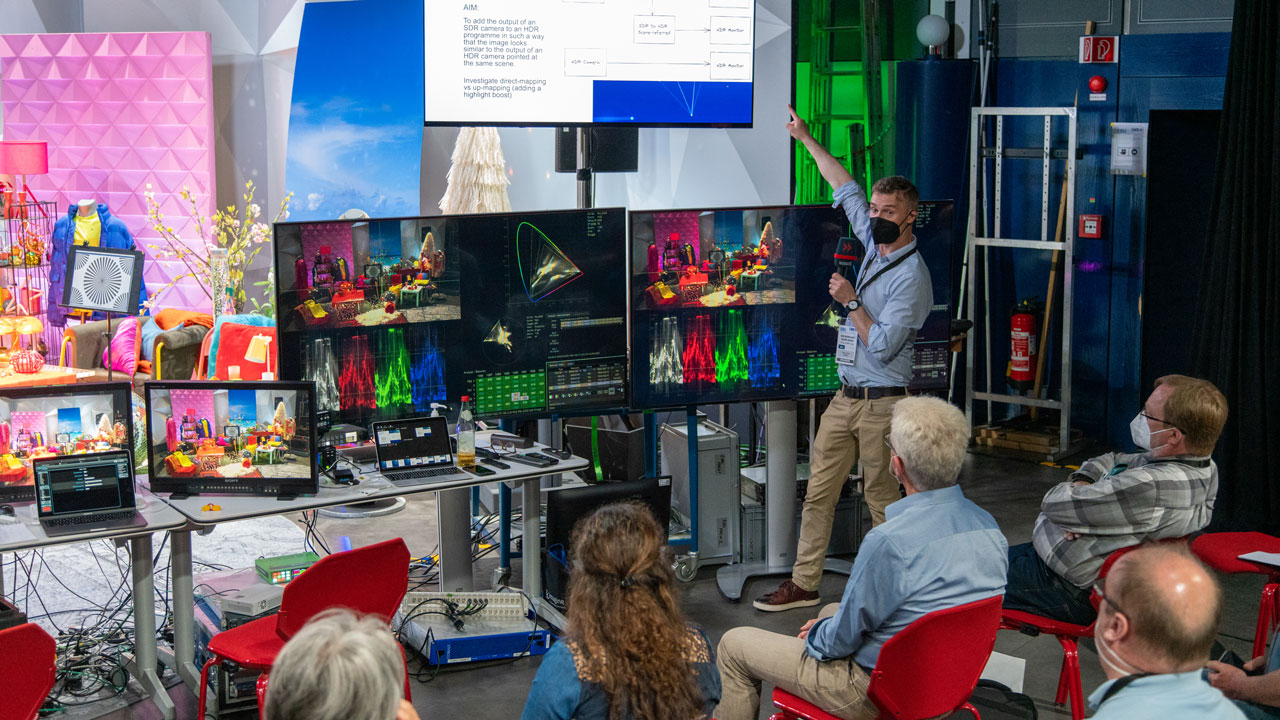

Since the HDR Workshop at the NRK in 2019, the topic of high dynamic range was overshadowed somewhat by the pandemic. Last year, the EBU’s HDR Implementation Task Force decided that spring 2022 would be a good moment to again shine some light on practical HDR aspects. A week-long workshop, primarily for EBU Members, was thus organized and kindly hosted by Germany’s SWR, at its Baden-Baden facilities.

The main goal of the workshop was to learn how to produce HDR, using both live and file-based workflows. For several broadcasters, live HDR production has become common, using workflows that have been optimized to guarantee the quality while limiting the complexity. Andrew Cotton and Simon Thompson (BBC R&D) showed how these workflows are built up, while David Adams (Sky UK) and Andy Beale and Prin Boon (BT Sport) shared their operational experiences.

Looking at SDR to create HDR

One of the main challenges in live HDR production today, which is typically reserved for large events, is that the majority of viewers still watch an SDR (standard dynamic range) image. At the same time, establishing complete parallel HDR and SDR workflows is not the solution, as this would vastly increase both the cost and the complexity of the set-up. The trick is to ‘downmap’ the HDR into an SDR signal (using a static look-up table, or LUT) and to use that SDR signal as the reference for shading the cameras. This so-called ‘closed-loop shading’ approach guarantees that the HDR and SDR signals track each other and that viewers without HDR at home are not treated like second-class citizens.
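To make the idea of a static downmapping LUT concrete, here is a minimal sketch. It assumes a one-dimensional table applied to a normalized signal value, whereas production downmappers typically use 3D LUTs operating on full colour triplets. The tone curve, the table size and the names `build_toy_lut` and `apply_static_lut` are hypothetical and chosen only for illustration; they do not represent any LUT shown at the workshop.

```python
def build_toy_lut(size=33, knee=0.5):
    """Build a hypothetical HDR-to-SDR tone curve as a 1D table.

    Assumption: inputs and outputs are normalized to 0..1. Below the knee
    the signal is lifted with slope 1.5; above it, highlights are
    compressed with slope 0.5 so that input 1.0 maps to output 1.0.
    """
    lut = []
    for i in range(size):
        x = i / (size - 1)
        if x <= knee:
            y = 1.5 * x
        else:
            y = 1.5 * knee + 0.5 * (x - knee)
        lut.append(y)
    return lut


def apply_static_lut(value, lut):
    """Look up a normalized value in the table with linear interpolation."""
    value = min(max(value, 0.0), 1.0)       # clamp out-of-range input
    pos = value * (len(lut) - 1)            # fractional table index
    i = min(int(pos), len(lut) - 2)         # lower neighbour
    frac = pos - i
    return lut[i] + frac * (lut[i + 1] - lut[i])


if __name__ == "__main__":
    lut = build_toy_lut()
    for hdr in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(f"HDR {hdr:.2f} -> SDR {apply_static_lut(hdr, lut):.3f}")
```

Because the table is static, the same HDR input always yields the same SDR output, which is what allows the SDR picture to serve as a stable shading reference.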

On the fourth day of the workshop, an industry day was held, where broadcasters spoke with industry partners about the key points to address in equipment and operation. The use of downmappers was one of the aspects raised.

The problem is that when broadcast parties exchange signals and use different downmappers, it becomes hard or even impossible to guarantee quality downstream. Visual ‘tweaking of the ProcAmp’ (the processing amplifier that can adjust various aspects of a video signal) is not a recipe for success. This scenario is not just academic; it has been faced in the recent past during large live events. At the workshop, several ideas to address this issue were proposed, and it was agreed to set up an open group to continue the work.

Learn more about the new HDR Downmapping group.

Equipment improvements

Another point made in Baden-Baden was that camera matching can be hard when cameras from different manufacturers are involved. Although the workshop showed that it can be done successfully, having a common starting point for the different devices could help save time in operation. Such an ‘EBU preset’ would not be intended for broadcast, but would serve as a fixed base on which an artistic look can be built.

Another time-saver would be the more extensive use of video payload identifiers (VPIDs) in video signals. These make sure receiving equipment knows the format of the signal. Questions such as “am I really looking at a BT.709 colour signal?” are then no longer needed. Although support is improving, it was clear during the workshop that not all equipment supports the VPID (yet). For some devices (e.g. LUT boxes) this is logical, as they may not be able to automatically deduce the signal type. In this case, an option to insert the VPID manually would be a very useful addition.
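The manual-insertion option suggested above can be sketched as a simple fallback rule. This is an illustration only: the `SignalFormat` type, its fields and the `resolve_output_format` function are hypothetical, and no actual VPID bit layout is modelled here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SignalFormat:
    """Hypothetical summary of what a payload identifier would signal."""
    transfer: str      # e.g. "SDR", "HLG", "PQ"
    colorimetry: str   # e.g. "BT.709", "BT.2020"


def resolve_output_format(
    detected: Optional[SignalFormat],
    manual: Optional[SignalFormat],
) -> Optional[SignalFormat]:
    """Decide which format a device should signal on its output.

    Assumption: an operator-supplied setting takes priority, because a
    device such as a LUT box cannot deduce what its own processing did
    to the signal. Without a manual setting the detected format is
    passed through; None means the format stays unsignalled.
    """
    if manual is not None:
        return manual
    return detected


if __name__ == "__main__":
    incoming = SignalFormat(transfer="HLG", colorimetry="BT.2020")
    operator = SignalFormat(transfer="SDR", colorimetry="BT.709")
    print(resolve_output_format(incoming, operator))
    print(resolve_output_format(incoming, None))
```

In this sketch, a LUT box performing a downmapping would be configured by the operator with the SDR output format, so downstream equipment no longer has to guess.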

File-based lessons

Pierre Routhier (CBC/Radio-Canada) led the post-production part of the event. He shared his experience with HDR grading and transformation, including how the extra time available in post-production can help achieve results superior to those of 3D-LUT-based approaches. Current non-linear editing software is very powerful, but correct use of the HDR features can be complicated and prone to bugs. The old adage to ‘fix things in post’ should be seen in a positive light: avoiding destructive camera settings (like changing the gain and black levels) is a good idea, as it prevents the loss of detail and maintains the flexibility to achieve a different look later.

Producing high-quality images is important, but similar to how audio engineers used to listen to mono car-speakers to check their mix under different conditions, it makes sense to check a professionally graded piece of content on several types of consumer TV sets. As experiments during the workshop showed, these sets have widely different ways of handling HDR content and generally tend to ignore HDR metadata. This means images can vary in terms of white point, black levels, gamut, etc. Checking on a professional monitor first and then on, for example, an OLED and a QLED television will give you confidence in the quality of what you produce and an impression of what the audience may see. Or as Pierre put it: “Some displays are made to ‘wow and dazzle’, while others follow the standard.”

Next steps

An informal poll at the end of the post-production sessions showed that more than 75% of the participants felt ready to continue with HDR, while the rest, roughly a quarter, would like more practice and/or training first. This aligns well with the result of a survey held in the first half of the year (available in EBU BPN 128), which showed that the vast majority of respondents are planning UHDTV HDR production within the next 2–5 years. Participants in that survey also asked for more education on the topic. The HDR Workshop has helped address that need.

The EBU will continue to share HDR experience and best practices. Next, an HDR FAQ based on lessons from the workshop will be provided, a demo for IBC is being prepared, and regular calls of the ‘HDR Downmapping’ group are being held.

A big thank you to all who supported this event!

This article was first published in issue 53 of tech-i magazine.